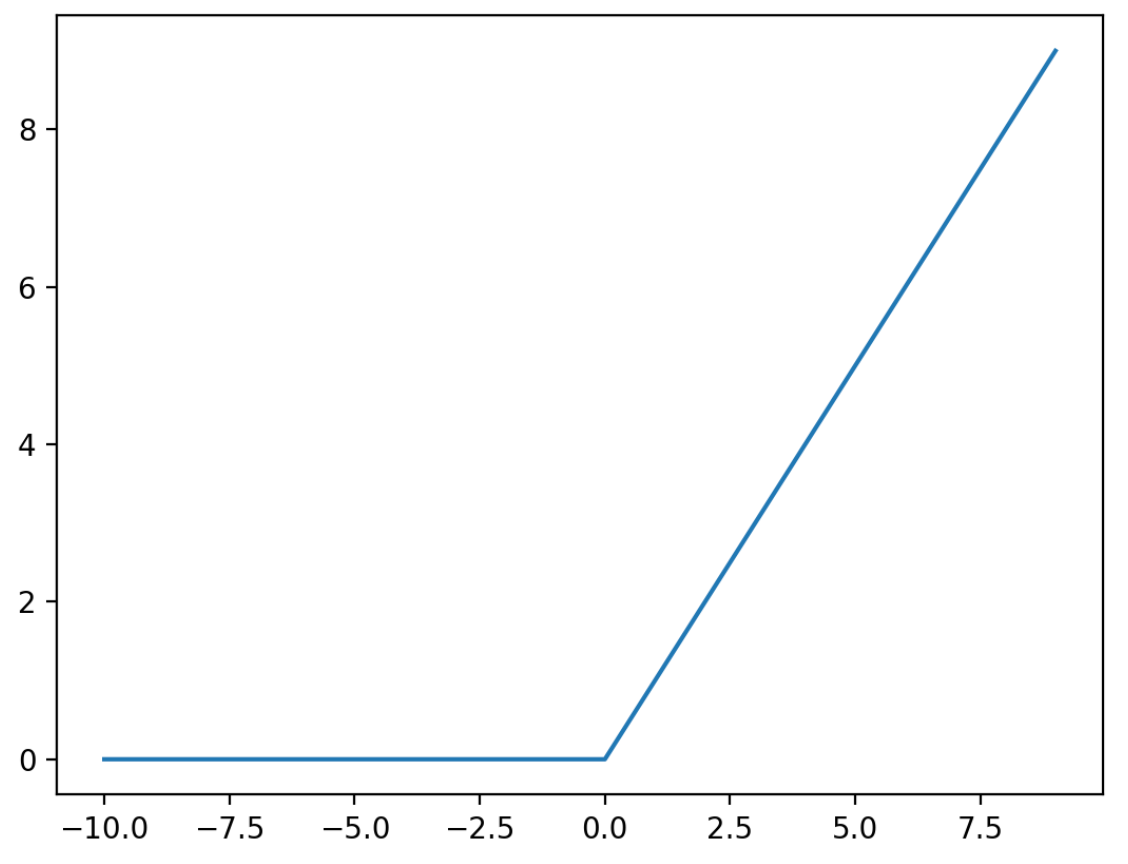

Rectified Linear Units, or ReLUs, are a type of activation function that is linear for positive inputs and zero for negative inputs. The kink at zero is the source of the non-linearity. Linearity in the positive region has the attractive property that it prevents saturation of gradients (contrast with sigmoid, whose gradient shrinks toward zero for inputs of large magnitude), although for half of the real line the ReLU gradient is exactly zero.
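The behaviour described above can be sketched in a few lines of plain Python, assuming the standard definition $f(x) = \max(0, x)$. The function names (`relu`, `relu_grad`, `sigmoid_grad`) are illustrative and not taken from any particular library, and the gradient value at exactly zero is a convention chosen here, since the function is not differentiable at the kink.

```python
import math


def relu(x: float) -> float:
    """ReLU: identity for positive inputs, zero otherwise."""
    return x if x > 0.0 else 0.0


def relu_grad(x: float) -> float:
    """Derivative of ReLU. The value at x == 0 is a convention (0 assumed here)."""
    return 1.0 if x > 0.0 else 0.0


def sigmoid(x: float) -> float:
    """Logistic sigmoid, used only for comparison."""
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_grad(x: float) -> float:
    """Derivative of the sigmoid: s(x) * (1 - s(x))."""
    s = sigmoid(x)
    return s * (1.0 - s)


if __name__ == "__main__":
    # Compare gradients: ReLU stays at 1 for any positive input,
    # while the sigmoid gradient decays as |x| grows.
    for x in (-5.0, -1.0, 0.0, 1.0, 5.0, 20.0):
        print(
            f"x={x:6.1f}  relu={relu(x):5.1f}  "
            f"relu'={relu_grad(x):.1f}  sigmoid'={sigmoid_grad(x):.2e}"
        )
```

For large positive inputs the ReLU gradient remains 1 while the sigmoid gradient becomes very small, which is the non-saturation property. For negative inputs the ReLU gradient is 0, which is the trade-off noted above.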

References